
Hi! That could for sure lead to a better search experience. I've already wished on some occasions that I could search for a logger using only its class name and not its fully qualified class name. On the other hand, I'm pretty sure I'd like to keep the ability to search for a fully qualified class name. If that helps, I'm not sure preserving the dot itself is mandatory, though: I guess as long as searching for

-

I've prototyped a change like that in the tokenizer config in the latest dev branch: https://github.com/binjr/binjr/tree/v3.12.0-dev

I've spent a little bit of time with it, and so far I like it much better than the previous behaviour. That said, I made that change specifically to help me with what I've been working on at the moment, so my own feedback is of course quite biased, and I'd really like to get your opinion on this. I'm just worried I'm going to hate it if I have to deal with a lot of IP addresses at some point...

-

It's not really Lucene per se that makes it hard to look for exact substrings, but rather the way field tokenization is generally configured; so for instance, to match everything that contains "Feb 20 19:3", you could use its regex syntax, something like

Changing the default tokenization to satisfy that particular need would be detrimental to the more common case of looking for a single word. But that gave me an idea: we could add an extra field that indexes the whole line as a single token to perform regex queries (or any other sub-query that would benefit from that). @Arkanosis w.d.y.t? If I find how to do a prefix handler in Lucene, we could even take out the ceremonial

Originally posted by @fthevenet in #123 (comment)

-

I've had experience with Atlassian's Confluence (which uses Lucene), and tokenization drives me bonkers. :-/ Basically, when I want to search for an IP address or hostname, it finds pages that don't have the desired string, but do have some of the tokens. E.g., if I look for "jenkins.example.com", it finds pages with "jenkins.example.com" (good), but also pages with just "example" or "com" or "example.com" (bad). Since Example.com is my company's domain, there's a very high number of pages that have "example.com" on them, and even more with just "com", making the search results just about worthless.

So my advice there is to have a secondary search: do the Lucene search first, and then filter the results it gives you using a slower but more precise string search. I do this with grep and awk: if I process a large logfile with awk, it'll take minutes, but if I filter it with grep first and feed that much-smaller result to awk, it takes seconds and gives the same results. The difference is that grep is highly optimized for searching strings, whereas awk has to be good at being a generalized language.
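
To make the "coarse search first, precise filter second" idea concrete, here is a minimal sketch in plain Java with no Lucene dependency. Everything in it is an assumption made for illustration: the class `TwoStageSearch`, its toy inverted index, and the simplistic tokenizer that splits on anything that is not a letter or digit. It is not how Confluence or binjr actually work.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

/** Hypothetical two-stage search: token lookup first, exact substring check second. */
final class TwoStageSearch {
    private final List<String> lines = new ArrayList<>();
    // Toy inverted index: token -> ids of the lines that contain it.
    private final Map<String, Set<Integer>> index = new HashMap<>();

    // Simplistic tokenizer (an assumption): "jenkins.example.com" becomes
    // "jenkins", "example" and "com".
    static List<String> tokenize(String text) {
        List<String> tokens = new ArrayList<>();
        for (String t : text.toLowerCase(Locale.ROOT).split("[^\\p{L}\\p{N}]+")) {
            if (!t.isEmpty()) {
                tokens.add(t);
            }
        }
        return tokens;
    }

    void add(String line) {
        int id = lines.size();
        lines.add(line);
        for (String token : tokenize(line)) {
            index.computeIfAbsent(token, k -> new TreeSet<>()).add(id);
        }
    }

    // Stage 1: every line sharing at least one token with the query (fast, imprecise).
    Set<Integer> candidates(String query) {
        Set<Integer> ids = new TreeSet<>();
        for (String token : tokenize(query)) {
            ids.addAll(index.getOrDefault(token, Collections.emptySet()));
        }
        return ids;
    }

    // Stage 2: keep only the candidates that contain the query verbatim (slow, precise).
    List<String> search(String query) {
        List<String> hits = new ArrayList<>();
        for (int id : candidates(query)) {
            String line = lines.get(id);
            if (line.contains(query)) {
                hits.add(line);
            }
        }
        return hits;
    }

    public static void main(String[] args) {
        TwoStageSearch s = new TwoStageSearch();
        s.add("deploy to jenkins.example.com done");
        s.add("mail from bob@example.com");
        System.out.println(s.candidates("jenkins.example.com"));
        System.out.println(s.search("jenkins.example.com"));
    }
}
```

The second stage only scans the lines the index already returned, which is the same reason the grep-then-awk pipeline is faster than awk alone.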

-

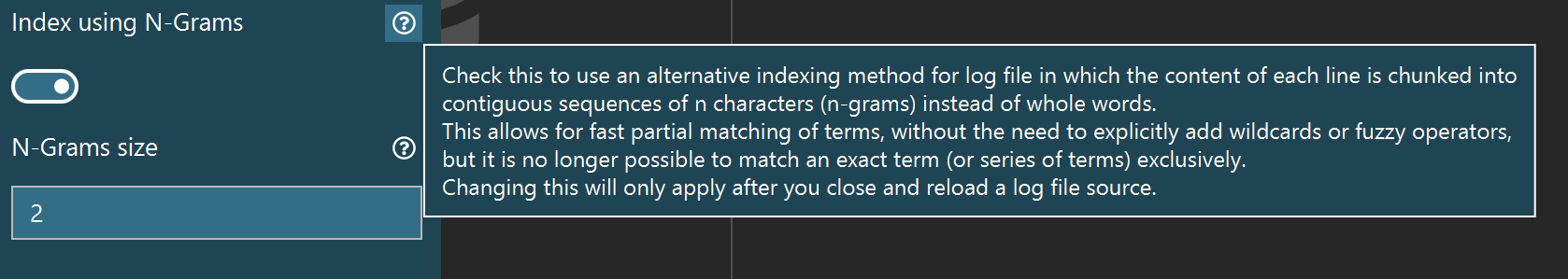

Hello! I have finally come up with a prototype for indexing log files using n-grams instead of word-based tokens in binjr. It took a while: as I suspected, it took me some time to figure out how to do it, but mostly it made an existing bug in Lucene a lot more visible, and I had to fix that first.

But first, a quick recap of the discussion so far: when indexing log files, binjr currently splits the text of each line, trying to identify what looks like words, and uses these so-called tokens as entries in the index for the line in question.

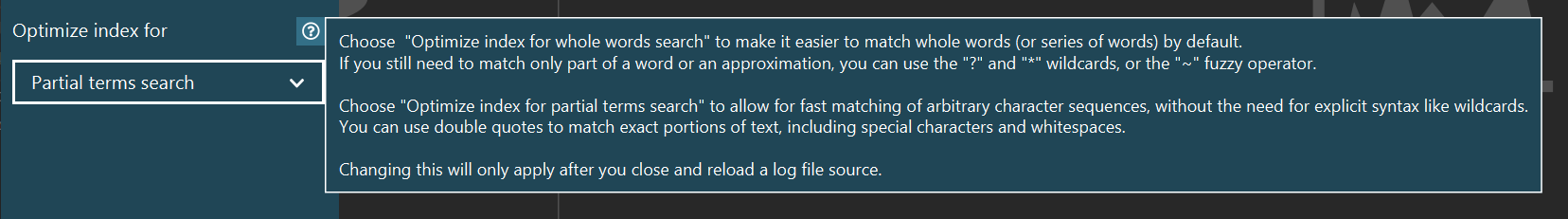

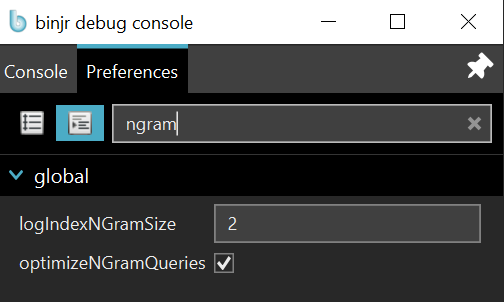

This indexing strategy could make for a more convenient and intuitive way to filter log file content, so it is available as an option in binjr and can be tried out right now in a preview of the next version, available here: https://github.com/binjr/binjr/releases/tag/v3.12.0-SNAPSHOT

To activate the feature, go to the settings panel and, in the "Logs" section, select "Index using N-Grams". There is an extra setting where you can choose the size of the n-grams (i.e. the value for n); it is set to 2 by default, which I have found to be a good compromise so far, but you're welcome to experiment with different values.
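
For readers unfamiliar with the term, here is a rough sketch of what character n-grams look like and how they allow matching any substring. It is plain Java written for illustration only: the class `NGramSketch` and its methods are hypothetical, it ignores n-gram positions, and it is not binjr's or Lucene's actual implementation.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

/** Hypothetical illustration of character n-grams; not binjr's actual code. */
final class NGramSketch {
    // All overlapping substrings of length n, in order of appearance.
    static List<String> ngrams(String text, int n) {
        List<String> grams = new ArrayList<>();
        for (int i = 0; i + n <= text.length(); i++) {
            grams.add(text.substring(i, i + n));
        }
        return grams;
    }

    // A line is a candidate when it contains every n-gram of the query.
    // Positions are ignored here, so false positives are possible, and a
    // query shorter than n produces no n-grams at all (it matches everything).
    static boolean mayContain(String line, String query, int n) {
        Set<String> lineGrams = new HashSet<>(ngrams(line, n));
        return lineGrams.containsAll(ngrams(query, n));
    }

    public static void main(String[] args) {
        System.out.println(ngrams("Feb 20", 2));
        System.out.println(mayContain("a.b.interestingBits = 1", "interestingBits", 2));
        // Candidate without being a real match: both "ab" and "bc" occur, "abc" does not.
        System.out.println(mayContain("ab bc", "abc", 2));
    }
}
```

Because the query is broken down the same way as the indexed text, a term can match in the middle of a word without needing a leading wildcard; the price is more index entries per line.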

-

Using n-grams indexing in binjr is not necessarily a silver bullet, however, and it comes with a few trade-offs.

While indexing times are largely similar without the anti-virus (and slightly longer for n-grams with the A/V on), the impact on index size is very significant: ~4x bigger when indexing with n-grams vs. indexing words (notably, the index is half the size of the raw text input in word mode, while it is twice as large in n-grams mode). All in all, this is not a show stopper, but it is definitely something users need to be aware of when using binjr on large log files, as they are more likely to run out of temp space using n-grams.

-

Hello! Has anyone had the chance to try the new indexing mode? Thanks!

-

Thanks for taking the time to try it out! 🙏

I'll start with that one as it is the most concerning to me. The first thing to do is to make sure that you were using the 9.6-SNAPSHOT build of Lucene, as this is the one that supposedly fixes the bug. If you're using one of the pre-built packages available here: https://github.com/binjr/binjr/releases/tag/v3.12.0-SNAPSHOT, that should be the case. If you built it yourself... it should also be the case, but you might want to double check that there isn't a copy of a previous version accessible on the classpath.

Once you're confident you're using the right version, if the issue still happens, it would be simpler if you could send me the elements for a reproducer. There's a pretty big chance that the problem lies in my own code, as I'm doing some custom query rewriting so that it is possible to generate the n-grams for the user-submitted terms while still leveraging the standard query parser to support things like boolean operators. And of course, the probability that this is another, unrelated Lucene bug is non-zero 🤷

-

Right now, it isn't possible to implement in binjr, as the type of tokenizer is set once and for all when the IndexWriter instance is built, and it is assumed that an index is only built and updated by a single IndexWriter. That is not to say it would be impossible to ever implement it, but it is certainly more work than it looks.

-

Well, you can't implement regular expressions on top of a field whose content has been split into n-grams, or at least I don't see how. What can be done is to also index each line in a separate field without tokenization of any sort, and apply regexes on that: I've done that in a POC and it works. The drawback is that this would further increase the index size (by about the raw text size, mitigated by the compression used), and it is unclear how well that would scale across a large-ish index. At any rate, this would not use the n-gram field at all.

With regard to your second point, what I gather you really want is to be able to make a query with the term

The bad news is that you cannot do that with n-grams (unless there's something that eludes me). The good news is, however, that you could search for

I'm really not sure about that said logic though, and I've considered changing it before; it might be time to do so!
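
As a rough illustration of applying regexes to whole, untokenized lines, the sketch below uses plain `java.util.regex` over a list of raw lines rather than a Lucene field. The class `WholeLineRegex`, the `grep` helper and the sample log lines are all hypothetical; a real index-backed version would behave differently in terms of storage and speed.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

/** Hypothetical stand-in for a regex query over an untokenized "whole line" field. */
final class WholeLineRegex {
    // Returns the lines in which the regex matches anywhere.
    static List<String> grep(List<String> rawLines, String regex) {
        Pattern pattern = Pattern.compile(regex);
        List<String> hits = new ArrayList<>();
        for (String line : rawLines) {
            if (pattern.matcher(line).find()) {
                hits.add(line);
            }
        }
        return hits;
    }

    public static void main(String[] args) {
        // Made-up log lines, for illustration only.
        List<String> lines = List.of(
                "Feb 20 19:31:02 host sshd: session opened",
                "Feb 20 18:59:59 host cron: job started",
                "Feb 21 19:30:00 host sshd: session closed");
        System.out.println(grep(lines, "Feb 20 19:3\\d"));
    }
}
```

Since the regex sees each line intact, spaces and punctuation inside the pattern are matched literally, which is exactly what word or n-gram tokens cannot offer.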

-

All in all, I'm pretty tempted to make this new mode the default, especially with the latest change in behaviour for quoted terms. Also, I'm considering removing the n-gram size option from the settings panel: I don't see much point in using anything other than bi-grams, as the increase in index size is minimal and it allows for a smaller minimum term size. It might have an impact at search time, as it increases the number of terms produced in the end query for larger user-supplied words, but I'm doubtful this would ever really be noticeable (I haven't noticed it, at least), given that even very large log files only make for rather small search corpora, and we only ever serve a single user at a time. WDYT?

-

Hi!

I'm considering a change in the way lines of log files are tokenized when added as documents in the Lucene index backend.

We're currently using the built-in StandardAnalyzer, which has the following notable properties:

This is an interesting property, as it allows searching for IP addresses or URLs as single tokens, but it makes looking for things in lines of code difficult.

For instance:

It is not possible to easily filter all lines containing interestingBits, since this string is part of tokens that start with something that varies (a, b, c, x, y, z). And wildcards will not help here, because Lucene does not allow a query to start with one (i.e. `*foo` is illegal).
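
The trade-off can be sketched in a few lines of plain Java. This is only an approximation: splitting on whitespace is a crude stand-in for the current behaviour, not StandardAnalyzer's real rules, and the class `DotSplitSketch`, its methods and the sample inputs are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical comparison of keeping dotted tokens whole vs. splitting on dots. */
final class DotSplitSketch {
    static List<String> tokens(String line, boolean splitOnDots) {
        // Assumption: whitespace-only splitting approximates "dots are kept".
        String separators = splitOnDots ? "[\\s.]+" : "\\s+";
        List<String> result = new ArrayList<>();
        for (String token : line.split(separators)) {
            if (!token.isEmpty()) {
                result.add(token);
            }
        }
        return result;
    }

    // Mimics a term or trailing-wildcard query: only the start of a token can match.
    static boolean matchesPrefix(String line, String prefix, boolean splitOnDots) {
        for (String token : tokens(line, splitOnDots)) {
            if (token.startsWith(prefix)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        String line = "a.interestingBits = 1"; // made-up line of code
        System.out.println(matchesPrefix(line, "interestingBits", false));
        System.out.println(matchesPrefix(line, "interestingBits", true));
        // The flip side: an IP address no longer survives as a single token.
        System.out.println(tokens("192.168.0.1", true));
    }
}
```

Splitting on dots makes the member name searchable on its own, at the cost of breaking IP addresses and host names into fragments.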
